Metrics, Metrics: How We Improved our Website Performance, Part 2

This post first appeared on the Big Nerd Ranch blog.

In Part 2, we continue down the rabbit hole of web optimization. Make sure to check out Part 1 for background on the metrics we’ll be investigating!

How do we level up?

Although our site was certainly not slow (thanks to the static build), several metrics showed it could benefit from web optimizations.

| Page | PageSpeed Score |
|---|---|
| https://www.bignerdranch.com/ | 61/100 |
| https://www.bignerdranch.com/blog/ | 56/100 |
| https://www.bignerdranch.com/work/ | 65/100 |

Our top three pages were hefty for a site that's primarily text-based:

| Page | Total Page Weight |
|---|---|
| https://www.bignerdranch.com/ | 1.7 MB |
| https://www.bignerdranch.com/blog/ | 844 KB |
| https://www.bignerdranch.com/blog/any-blog-post/ | 830 KB |

A total page weight of 1.7 MB for the homepage could negatively impact our mobile users (an ever-growing audience), especially for those browsing on their data plan.

What Did You Do?

Blocking resources like scripts and stylesheets are the primary bottleneck in the critical rendering path and Time-To-First-Render, so our first priority was to tidy up blocking resources. We audited our usage of Modernizr and found we were using only one test, so we inlined it. As for the blocking stylesheet, we just minified it with Sass’s compact mode for a quick win. With those changes, we reduced the “blocking weight” by 33% across all pages.

So what? The faster blocking resources are downloaded (and stop blocking), the sooner the page will be rendered. That will significantly reduce Time-To-Visual-Completeness.

Images

Next, to speed up Time-To-Visual-Completeness, we targeted the size and number of non-blocking requests, like images and JavaScript. We used image_optim to losslessly shrink PNGs, JPEGs and even SVGs; since images are our heaviest asset, this cut down size dramatically (sometimes as much as 90%) by taking advantage of LUTs and nuking metadata. The homepage banner was particularly large, so we opted for lossy compression to cut the size in half. The quality difference is almost unnoticeable, so it was a worthwhile tradeoff.

JavaScript

Script optimization took a little more thoughtfulness: we were already minifying scripts, but different pages loaded up to five files (libraries and “sprinkles” of interactivity). jQuery and its host of plugins comprised the largest payload, so we scoured Google Analytics to determine which versions of Internet Explorer we needed to support.

IE9 and below accounted for 5% of our traffic on top pages, but on pages that depended on jQuery (those with forms like our Contact page), IE9– made up less than 4% of traffic (only a fraction of those visitors used the form). Armed with these statistics, we opted to support IE10+ with jQuery 2. Still, this only shaved 10 KB, and jQuery’s advanced features were really only used by forms.

However, by dropping IE9– support, we were able to swap in Zepto.js on most of our pages. At 25 KB, Zepto is tiiiny; pages with forms still pull in jQuery 2, but all other pages can opt for the economical Zepto library instead.

Our own JavaScript got some tidying: latency was the limiting reagent for our (very small, <6 KB overall) scripts, so we opted to concatenate all our JavaScript into a single script. We also made sure to wrap all the files in an IIFE to help the minifier tighten up variable names. In the process, we discovered some unnecessary requests, like blog searching and external API calls.
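As a rough illustration of that concatenation step, here is a minimal Python sketch of a build helper that wraps each script in its own IIFE and joins the results into one payload. It is not the site's actual build code: the function names and the two sample scripts are hypothetical, and a real pipeline would hand the result to a minifier afterwards.

```python
from typing import Iterable


def wrap_in_iife(source: str) -> str:
    """Wrap one script in an immediately invoked function expression.

    Top-level `var` declarations become function-local, so a minifier
    can shorten their names without worrying about global collisions.
    """
    return "(function () {\n" + source.strip() + "\n})();"


def bundle(sources: Iterable[str]) -> str:
    """Concatenate scripts into a single payload, one IIFE per script."""
    return "\n".join(wrap_in_iife(src) for src in sources)


# Hypothetical stand-ins for the site's small scripts.
scripts = [
    "var menu = document.querySelector('.menu');",
    "var form = document.querySelector('form');",
]
bundled = bundle(scripts)
print(bundled)
```

Wrapping each file separately (rather than the whole bundle once) keeps one script's variables from leaking into another, at the cost of a few extra bytes per file.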

HTML

For completeness, we added naïve HTML minification. To get the most out of this step, you should use a whitespace-sensitive templating language like Slim or Jade. Still, with GZIP compression enabled, the win was minor and made economical sense only because it was a quick addition.
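To show what "naïve" means here, the following Python sketch collapses whitespace with two regular expressions. It is an assumption-laden illustration rather than the minifier the site uses: `minify_html` is a hypothetical name, and the approach will mangle whitespace-sensitive content such as `<pre>` blocks or spaces between inline elements.

```python
import re


def minify_html(html: str) -> str:
    """Naively shrink HTML by collapsing whitespace.

    Unsafe for <pre>, <textarea>, inline scripts, and for spaces
    between inline elements; fine only for simple markup.
    """
    # Remove whitespace that sits purely between two tags.
    collapsed = re.sub(r">\s+<", "><", html)
    # Collapse any remaining whitespace runs into a single space.
    collapsed = re.sub(r"\s+", " ", collapsed)
    return collapsed.strip()


page = "<ul>\n  <li>One</li>\n  <li>Two   items</li>\n</ul>\n"
print(minify_html(page))
```

Because GZIP already compresses repeated whitespace well, the savings from a pass like this are small, which matches the minor win described above.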

Server tweaks

After optimizing actual resource size, static servers like Apache and Nginx can help further reduce over-the-wire size and the number of requests.

We enabled compression (DEFLATE and GZIP) for all text-based resources:

    <IfModule mod_deflate.c>

        # Force compression for mangled headers.
        # http://developer.yahoo.com/blogs/ydn/posts/2010/12/pushing-beyond-gzipping
        <IfModule mod_setenvif.c>
            <IfModule mod_headers.c>
                SetEnvIfNoCase ^(Accept-EncodXng|X-cept-Encoding|X{15}|~{15}|-{15})$ ^((gzip|deflate)\s*,?\s*)+|[X~-]{4,13}$ HAVE_Accept-Encoding
                RequestHeader append Accept-Encoding "gzip,deflate" env=HAVE_Accept-Encoding
            </IfModule>
        </IfModule>

        # Compress all output labeled with one of the following MIME-types
        # (for Apache versions below 2.3.7, you don't need to enable `mod_filter`
        # and can remove the `<IfModule mod_filter.c>` and `</IfModule>` lines
        # as `AddOutputFilterByType` is still in the core directives).
        AddOutputFilterByType DEFLATE application/atom+xml \
                                      application/javascript \
                                      application/json \
                                      application/rss+xml \
                                      application/vnd.ms-fontobject \
                                      application/x-font-ttf \
                                      application/x-web-app-manifest+json \
                                      application/xhtml+xml \
                                      application/xml \
                                      font/opentype \
                                      image/svg+xml \
                                      image/x-icon \
                                      text/css \
                                      text/html \
                                      text/plain \
                                      text/x-component \
                                      text/xml
    </IfModule>
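To get a feel for why compressing text resources pays off, this small Python sketch measures how much gzip shrinks a text payload locally. The helper name `gzip_savings` and the sample stylesheet are made up for illustration; the sample is deliberately repetitive, so it compresses far better than a real stylesheet would, and the number says nothing about the site's own assets.

```python
import gzip


def gzip_savings(text: str) -> float:
    """Return the fraction of bytes removed by gzip (0.0 to 1.0)."""
    raw = text.encode("utf-8")
    compressed = gzip.compress(raw)
    return 1 - len(compressed) / len(raw)


# Hypothetical, highly repetitive stylesheet used only as sample input.
sample_css = ".button { color: #333; padding: 4px 8px; }\n" * 200
savings = gzip_savings(sample_css)
print(f"gzip removed {savings:.0%} of the bytes")
```

Running the same function over a build's actual CSS, JavaScript and HTML files is a quick way to estimate over-the-wire sizes before touching the server config.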

Since we enabled cache busting (e.g. main-1de29262b1ca.js), we bumped the Cache-Control header for all non-HTML files to one year, the longest lifetime HTTP/1.1 recommends. Because the filename of a script or stylesheet changes whenever its contents change, users always receive the latest version, but request it only when necessary.

    <IfModule mod_headers.c>
        <FilesMatch "(?i)\.(css|js|ico|png|gif|svg|jpg|jpeg|eot|ttf|woff)$">
            Header set Cache-Control "max-age=31536000, public"
        </FilesMatch>
    </IfModule>
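The year-long cache lifetime is only safe because filenames are fingerprinted. The Python sketch below shows one way such names could be generated: hash the file contents and splice a short digest into the filename. The choice of SHA-256 truncated to 12 hex characters is an assumption made to mirror the shape of main-1de29262b1ca.js; the source does not say which hash the site's asset pipeline uses, and `fingerprint` is a hypothetical helper.

```python
import hashlib
from pathlib import PurePosixPath


def fingerprint(filename: str, content: bytes, length: int = 12) -> str:
    """Insert a short content hash before the file extension.

    Assumption: SHA-256 truncated to `length` hex characters; any
    stable content hash would serve the same purpose.
    """
    digest = hashlib.sha256(content).hexdigest()[:length]
    path = PurePosixPath(filename)
    return str(path.with_name(f"{path.stem}-{digest}{path.suffix}"))


# Identical contents yield the same name; any edit yields a new one.
name = fingerprint("main.js", b"console.log('hello');")
print(name)
```

Since the HTML references the fingerprinted name and is itself not cached for a year, a changed asset gets a new URL and browsers fetch it on the next visit.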

Show Me the Numbers!

We expected dramatic improvement across various metrics after enabling GZIP and aggressive caching. With blocking scripts nuked from the head, Time-To-Visual-Completeness should drop to within our 2-second goal.

Page weight

Sizes are of gzipped resources.

| Page | Resource type | Before | After | Reduction |
|---|---|---|---|---|
| All | Blocking (JS/CSS) | 21.2 KB | 14.7 KB | 31% smaller |
| https://www.bignerdranch.com/ | Scripts | 39.8 KB | 11.9 KB | 70% smaller |
| https://www.bignerdranch.com/ | Images | 1.1 MB | 751 KB | 27% smaller |
| https://www.bignerdranch.com/blog/ | All + search | 844 KB | 411 KB | 51% smaller |
| https://www.bignerdranch.com/blog/any-blog-post/ | All | 830 KB | 532 KB | 36% smaller |

We cut our average page weight in half!
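For readers who want to check the arithmetic, the percentages in the table are simply the relative drop from before to after. The Python sketch below recomputes them for the rows whose sizes are given in KB; the images row is left out because its "before" value is rounded to the nearest tenth of a megabyte. The function name and row labels are illustrative.

```python
def percent_smaller(before: float, after: float) -> int:
    """Relative size reduction, rounded to the nearest whole percent."""
    return round((before - after) / before * 100)


# (label, before in KB, after in KB), taken from the table above.
rows = [
    ("Blocking (JS/CSS), all pages", 21.2, 14.7),
    ("Homepage scripts", 39.8, 11.9),
    ("Blog index, all + search", 844, 411),
    ("Blog post, all", 830, 532),
]
for label, before, after in rows:
    print(f"{label}: {percent_smaller(before, after)}% smaller")
```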

Google PageSpeed score

Scores are for mobile platforms.

| Page | Before | After | Improvement |
|---|---|---|---|
| https://www.bignerdranch.com/ | 61/100 | 86/100 | +25 |
| https://www.bignerdranch.com/blog/ | 56/100 | 84/100 | +28 |
| https://www.bignerdranch.com/work/ | 65/100 | 89/100 | +24 |

We addressed all high-priority issues in PageSpeed, and the outstanding low-priority issues are from the Twitter widget. On desktop, our pages score in the mid-90s.

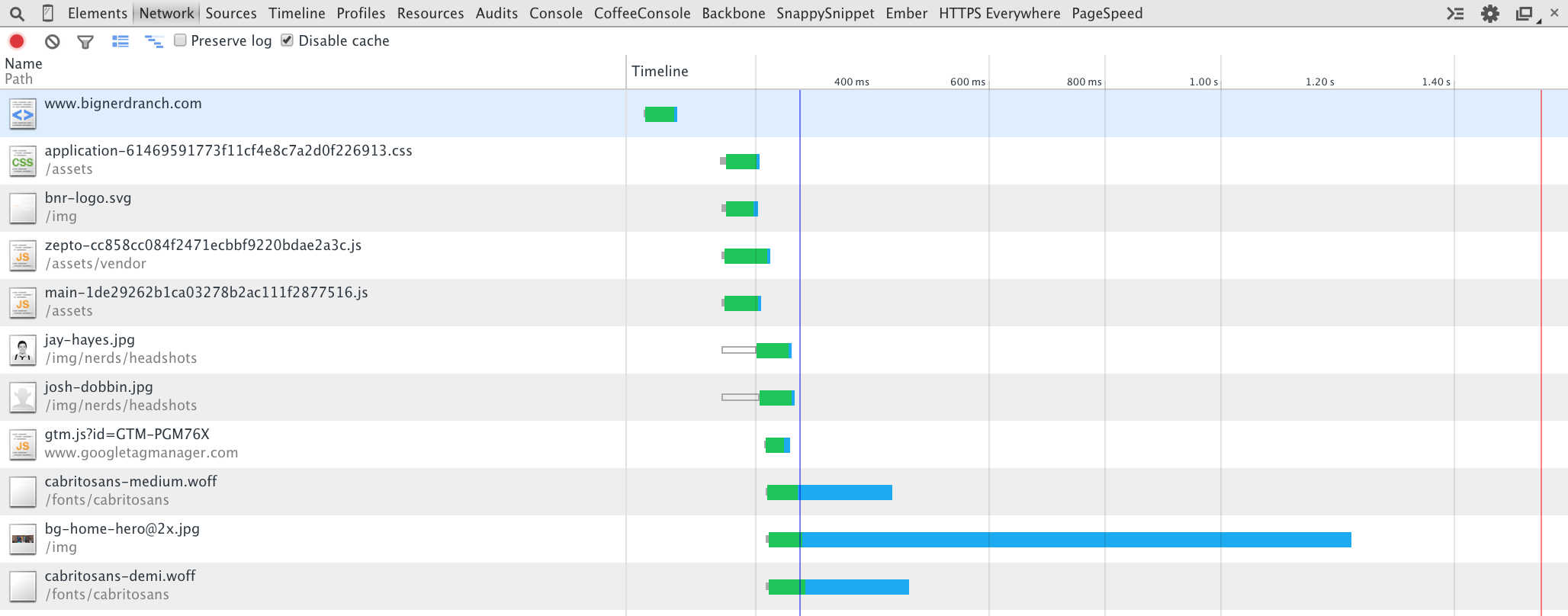

WebPageTest

Bolded links indicate repeat visits to the page (caching performance).

| Page | Total Load Time | Time To First Byte | Time To Visual Completeness |
|---|---|---|---|
| https://www.bignerdranch.com/ | 2.185s | 0.210s | 1.996s |
| **https://www.bignerdranch.com/** | 1.314s | 0.573s | 0.669s |
| https://www.bignerdranch.com/blog/ | 2.071s | 0.188s | 0.696s |
| **https://www.bignerdranch.com/blog/** | 0.850s | 0.244s | 0.371s |
| https://www.bignerdranch.com/work/ | 2.606s | 0.395s | 1.088s |
| **https://www.bignerdranch.com/work/** | 0.618s | 0.207s | 0.331s |

TTFB tends to be noisy (ranging from 200 to 600 milliseconds), and thus Total Load Time varies drastically. However, the most important metric, Time-To-Visual-Completeness, is now consistently under 2 seconds for new visitors on all pages. And thanks to more aggressive caching, repeat visitors will wait well under a second (about 0.3 to 0.7 seconds in these runs) to view the latest content.

That’s a Wrap

The results of the audit proved delightful: we cut the average page weight in half and significantly improved the Time-To-Visual-Completeness. In the future, we will be evaluating other optimizations for bignerdranch.com:

- Inline core CSS styles: our stylesheet still blocks the parser, but inlining can complicate source code.

- Switch to Autoprefixer and Clean CSS instead of relying on Sass’s compact mode.

- Serve assets over CDNs or inject proxies to optimize delivery.

- Use SVG sprites for various logos, like social media icons.

- Switch to Nginx from Apache. Nginx is compiled with modules, so it tends to use less memory and is better with heavy traffic. Since our site is static, the server is the limiting reagent in TTFB.

- Enable SPDY and HTTP/2 to further cut down latency with request multiplexing.

- Use mod_pagespeed to automate CSS inlining and other improvements.

- Speedier Node-based build. Jekyll greatly improved our internal publishing process, but we’ve felt some major architectural frustrations. We foresee bringing our expertise with Node-based pipelines to our internal build process.